How I built a dev server that runs on half a lightbulb

A $1/month dev server you can control from your phone. Here’s how I built a Raspberry Pi–powered system to scrape, curate, and publish a fully automated newsletter.

In my last post, I gave you a sneak peek into how I build and deploy the bay.dance dancer newsletter from my phone. Today, we'll take a deep dive into every aspect of the setup - hardware, software, networking, scraping, and publishing. (TL;DR: Raspberry Pi, Claude Code Remote Control, Tailscale, Telegram, agent-browser, cron, and Django.)

At the end of this, you'll have the knowledge to build your own dev server and personal AI assistant. It's a great way to build out the pieces that form a system similar to OpenClaw, while being customized to your needs. And the best part is, you can do it all without shelling out $500+ (!) for a freaking Mac Mini.

Why create bay.dance?

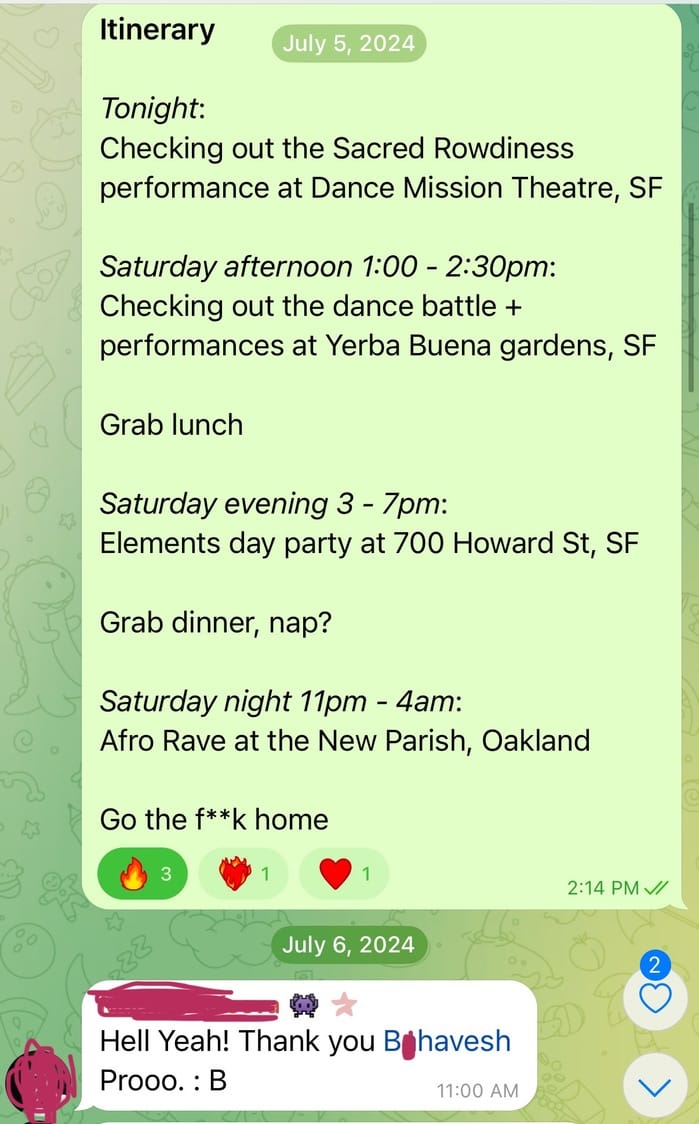

In the beginning, there was chaos - dancers all over the Bay Area were scattered among a mishmash of group chats, email lists, calendars, Instagrams, Partifuls, Lumas, and other apps with silly startup names. All they really wanted to know was: "Where are the dance events going to be this weekend?"

To solve this, I accidentally started a newsletter in the summer of 2024. I honestly just wanted my dance classmates to join me at the amazing House community events that I danced at. So I wrote an "itinerary" for that weekend and sent it out to my classmates...and the rest is history.

Since then, I've been addicted to curating and publishing Bay Area dance events. I've sent out a newsletter every single week for the past 1.75 years, no exceptions. But I don't do it manually anymore like I did in the beginning - I've automated the tedium of scraping flyers, ingesting event details, constructing the newsletter, and running the website. Here's how I do it.

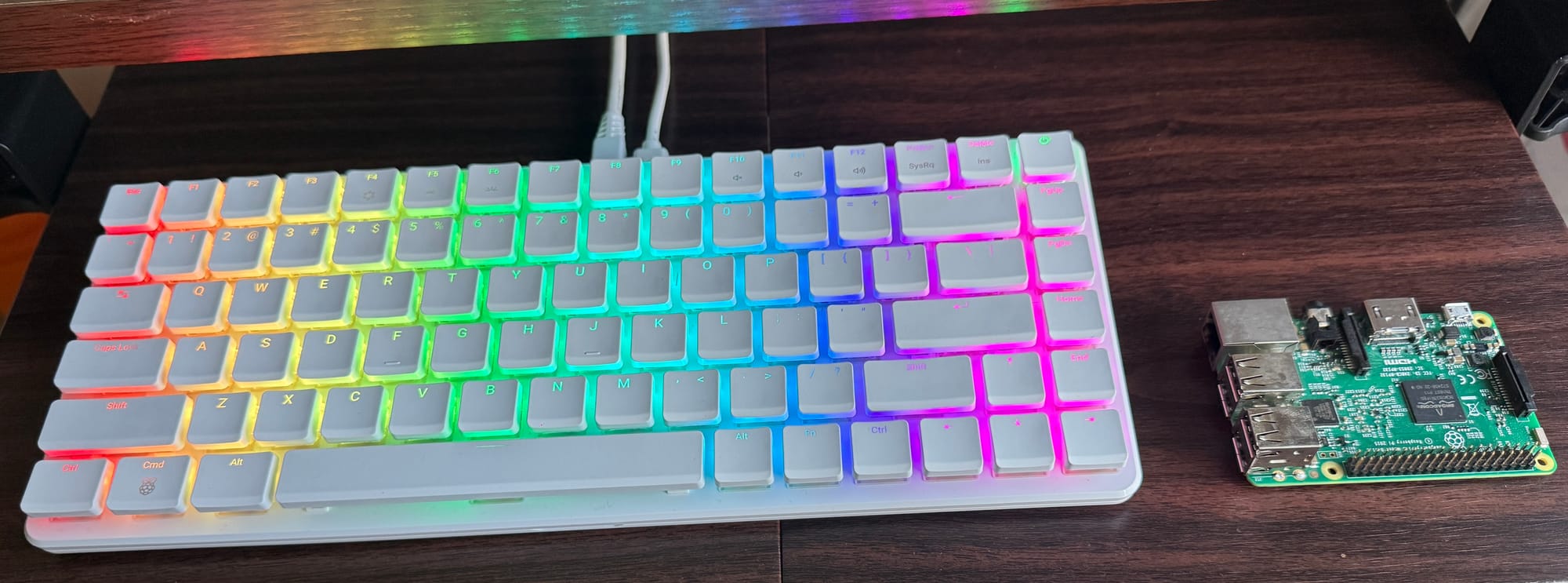

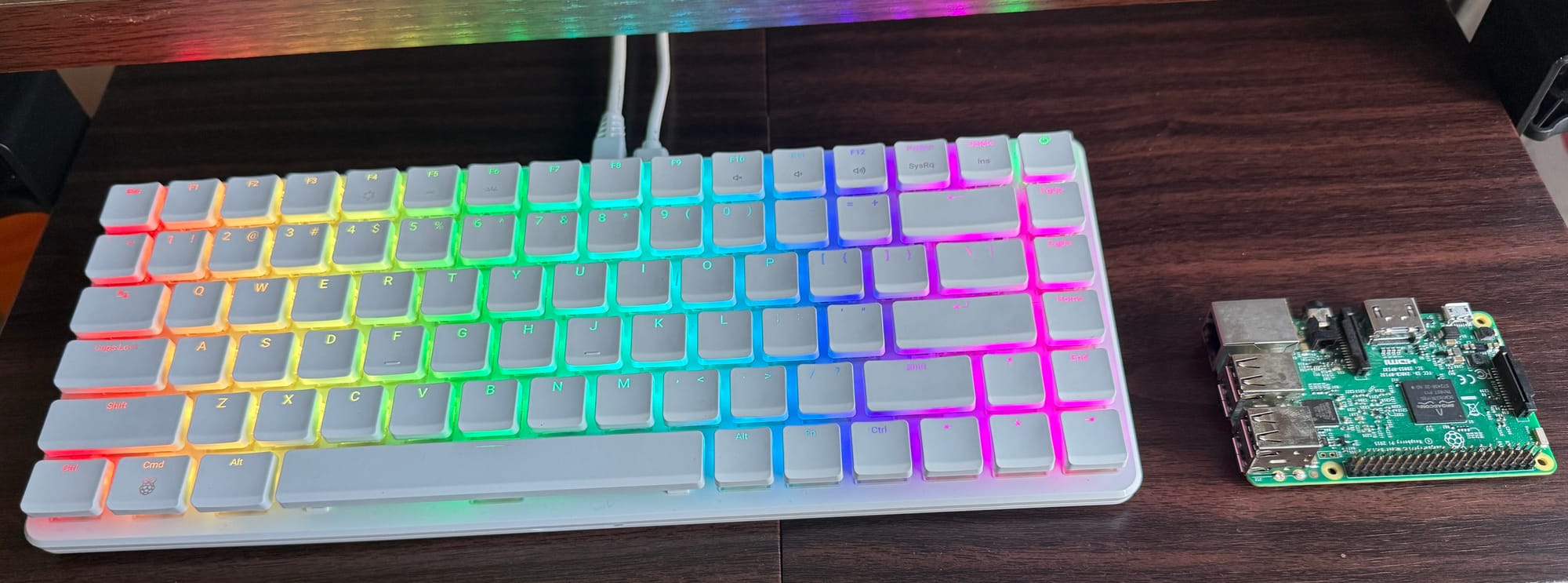

Hardware: a delicious Raspberry Pi

The Raspberry Pi is ideal for a dev server, because:

Linux

The Pi runs Linux, the same OS as the production server. This minimizes "it works on my machine" discrepancies between dev and prod.

Power

It sips very little power at idle, in the range of 2.7-3.3 W. That is less than half the power draw of an energy-efficient LED lightbulb (or ~500x less than a microwave, while we're comparing). This means I can keep it always-on for ~$1 per month in electricity costs.
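As a rough sanity check on that ~$1 figure, here is the arithmetic as a small Python sketch. The wattages come from the idle range above; the $0.40/kWh rate is an assumption I picked for illustration, so plug in your own utility rate.

```python
# Back-of-the-envelope electricity cost for an always-on Pi.
# ASSUMPTION: 0.40 USD/kWh is an illustrative rate, not a quoted tariff.

HOURS_PER_MONTH = 24 * 30  # a 30-day month


def monthly_cost_usd(watts: float, usd_per_kwh: float) -> float:
    """Cost of running a constant load for a 30-day month."""
    kwh = watts * HOURS_PER_MONTH / 1000  # watt-hours -> kilowatt-hours
    return kwh * usd_per_kwh


if __name__ == "__main__":
    for watts in (2.7, 3.0, 3.3):
        print(f"{watts} W -> ${monthly_cost_usd(watts, 0.40):.2f}/month")
```

At that assumed rate, a steady 3 W works out to about 2.16 kWh and roughly $0.86 per month. The Pi draws more than its idle figure under load, so treat this as a floor rather than a bill.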

Price

The Pi is cheap! Cheap cheap cheap 🐣 If you're a hardware hacker, you probably have one lying around for free. Otherwise, you can buy a Pi 4 for $35 or a Pi 5 for $45.

You might be wondering - why not just rent a VPS (virtual private server) in the cloud instead of buying a physical server? There are two important reasons, which I mention in the Networking and Scraping sections below. But first, let's talk about the software.

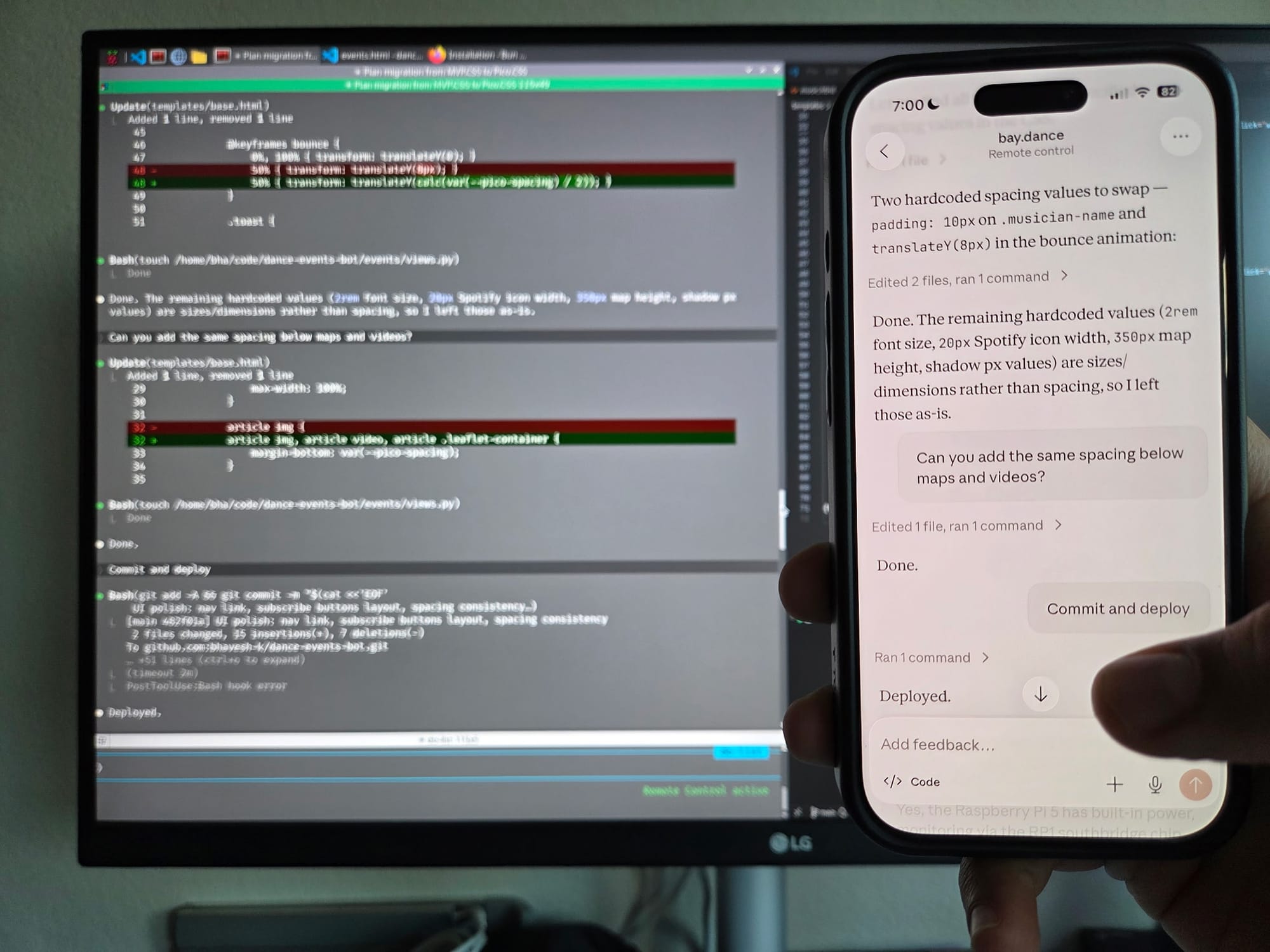

Software: the rocket in my pocket

Every software dev already knows that Claude Code (CC) is the best coding agent, so I won't belabor the point. But what you might not know is that you can use it from your phone without needing OpenClaw.

It's called Remote Control, and it allows you to use your Claude monthly plan instead of having to pay API prices. This is a huge advantage over OpenClaw, as it ensures you get a fixed-price bill (say, $20) every month instead of worrying about API fees jacking up your costs by using uncached tokens on every request.

Another major advantage is that Remote Control has a built-in plan viewer and diff viewer. So I'm able to iterate on plans and check the agent's work, just like I'm used to doing on desktop. Honestly, it's more fun than using CC on desktop because I can talk to it via voice-to-text while I'm on the move. I've "written" way more features and bugfixes this way than I ever did sitting in a chair 🤩

To enable Remote Control, just type /remote-control into your CC session.

CC doesn't just build features, though - it also manages the local web server so I can preview new features remotely from my phone. For security reasons, only I have remote access to the local web server. I do this using Tailscale, as explained below.

Networking: hiding my tail(scale) between my legs

I mentioned earlier that I'd explain why I run a physical server instead of a VPS. Here's the first reason: security! When you rent a VPS, it has a publicly visible IP address. This attracts hacker bots, which constantly probe your server for vulnerabilities and try to gain access to the box. It is possible to secure the box against most attacks, but it takes a lot of complicated sysadmin work.

I prefer to just cut the proverbial Gordian knot, i.e. to skip the VPS in favor of using Tailscale. This has the security benefit of creating a mesh VPN between my phone and Pi, so that no one else can access or even see my Pi on the internet. The only way to access it is to be on the same private network. I am so confident in this that my GIF below literally shows you the tailnet addresses of my phone and Pi...you won't be able to access either of them.
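If you want a quick way to check that a request really came in over the tailnet, here is a minimal sketch. It relies on the fact that Tailscale assigns IPv4 addresses from the 100.64.0.0/10 block. The helper name `is_tailnet_address` is hypothetical and is not part of the bay.dance code or of Tailscale itself.

```python
import ipaddress

# Tailscale hands out IPv4 addresses from the CGNAT block 100.64.0.0/10.
TAILNET_V4 = ipaddress.ip_network("100.64.0.0/10")


def is_tailnet_address(addr: str) -> bool:
    """Return True if addr is an IPv4 address inside the tailnet range."""
    try:
        ip = ipaddress.ip_address(addr)
    except ValueError:
        return False  # not a valid IP address at all
    return ip.version == 4 and ip in TAILNET_V4


if __name__ == "__main__":
    for candidate in ("100.101.102.103", "192.168.1.10", "8.8.8.8"):
        print(candidate, is_tailnet_address(candidate))
```

Treat this as a sanity check, not a security boundary: 100.64.0.0/10 is also the range ISPs use for carrier-grade NAT, and the real protection comes from Tailscale keeping the Pi off the public internet.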

Security is important, but it's not the only benefit of running a home server. The other benefit is having a residential IP address for scraping.

Scraping: flawless and clawless

Why shouldn't you use a VPS for web scraping? Because many VPS IP ranges have at some point been used to host DDoS attacks, spam, or malicious bots. Hence, their IP addresses are often considered "dirty", i.e. blocklisted by a lot of anti-bot software. Web browsing or scraping from a VPS is very likely to get you bot-kicked from many websites.

My Pi, on the other hand, is at my apartment. It's on a residential IP address, which has a much better reputation and hence a higher chance of passing through bot filters undetected. This is not foolproof though - if you scrape large quantities of webpages too frequently, there is still a chance of getting bot-kicked. To avoid these risks, I do the following:

- Program my scraper to wait a long time (a random 30-60 seconds) between scraping pages, and

- Use a throwaway account for scraping instead of my actual account
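The first precaution can be sketched in a few lines of Python. This is an illustration rather than my actual scraper: `scrape_politely` and the `scrape_page` callback are hypothetical names, and the `sleep` parameter is only there so the pause can be swapped out in tests.

```python
import random
import time
from typing import Callable, Iterable


def scrape_politely(
    urls: Iterable[str],
    scrape_page: Callable[[str], None],
    min_wait: float = 30.0,
    max_wait: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Scrape each URL, pausing a random interval between pages."""
    for index, url in enumerate(urls):
        if index > 0:
            # Randomized pause so requests don't arrive on a fixed beat.
            sleep(random.uniform(min_wait, max_wait))
        scrape_page(url)
```

The wait happens between pages only, so a run over N pages pauses N-1 times.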

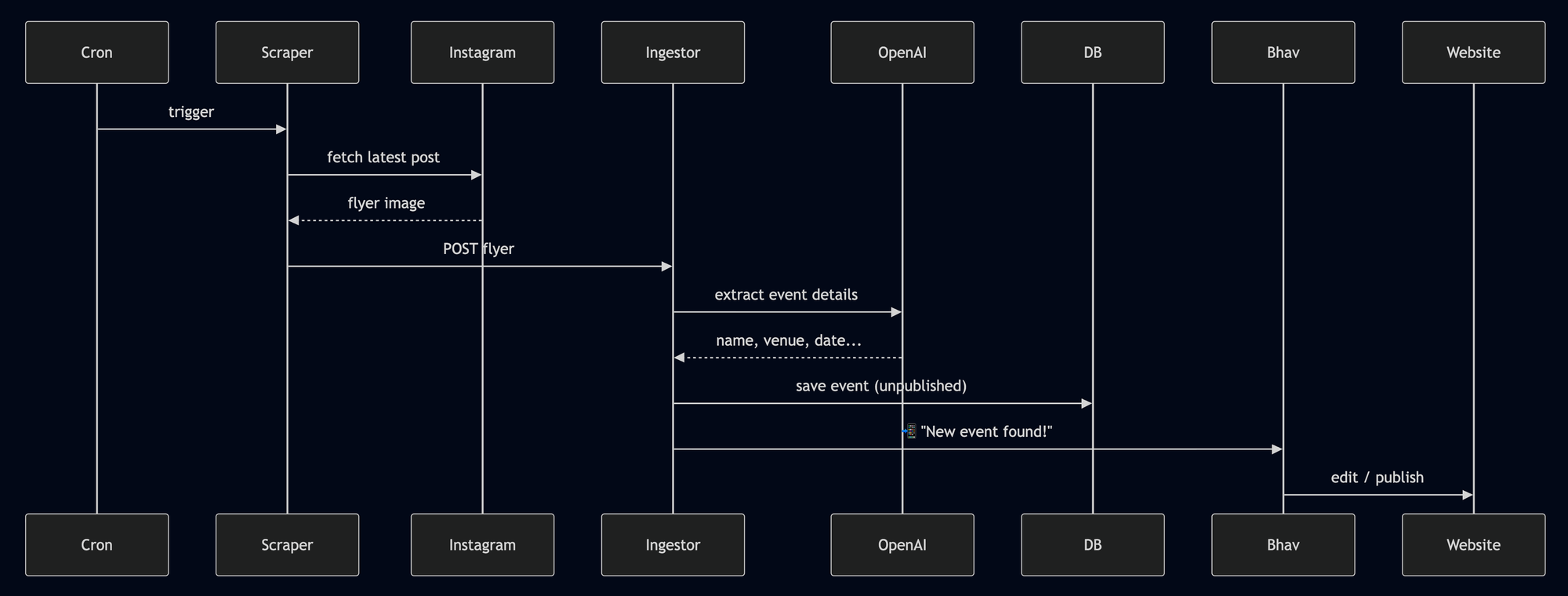

With those caveats out of the way, here is my scraping setup: I use the agent-browser CLI, which is designed for use by agents and scripts. I asked CC to set up a cron job to scrape flyers off a list of my favorite dancer/DJ Instagram pages every day. It was able to play with agent-browser until it got the flyers I wanted. But it didn't stop there.

CC then exported its process (loop through the IG pages in a browser, check latest post for a new flyer, submit the flyer to bay.dance backend) into a standalone bash script. The cron job runs this bash script every day, which makes the flyer output deterministic as it doesn't rely on an LLM anymore.
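The real script is bash, but the shape of that loop is easy to show in Python. The sketch below is a stand-in under stated assumptions: `fetch_latest_post_id` and `submit_flyer` are hypothetical callbacks (in practice they would wrap the browser automation and the backend upload), and the JSON state file is my guess at one simple way to remember which posts were already handled.

```python
import json
from pathlib import Path
from typing import Callable, Iterable, Optional


def run_daily_scrape(
    pages: Iterable[str],
    fetch_latest_post_id: Callable[[str], Optional[str]],
    submit_flyer: Callable[[str, str], None],
    state_file: Path,
) -> list[str]:
    """Submit each page's latest post once; return pages that had a new post."""
    # Load the last post ID seen per page (empty on the very first run).
    seen = json.loads(state_file.read_text()) if state_file.exists() else {}
    updated = []
    for page in pages:
        post_id = fetch_latest_post_id(page)
        if post_id is None or seen.get(page) == post_id:
            continue  # nothing new since the last run
        submit_flyer(page, post_id)
        seen[page] = post_id
        updated.append(page)
    state_file.write_text(json.dumps(seen, indent=2))
    return updated
```

Because there is no LLM in the loop, running it twice against the same posts submits nothing the second time.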

Publishing: everybody dance now!

Finally, the part where my human qualities shine - curating the best dance events from a sea of noise. What makes a dance event the best, you ask? It's simple, you silly goose: if there are a lot of people dancing and they're connected to the music, then it's the best!

    if people_dancing / total_people > 0.25 and is_connected(music, people_dancing):
        add_event_to_newsletter()
    else:
        pass

My absurd attempt at encoding embodied human intuition

As you can see, a computer algorithm just won't cut it. So I haven't automated the event curation part, and I likely never will. We humans gotta be good for something in this new world!

That being said, there is a lot of tedium around publishing which I have automated. The backend is written in Python, and consists of two components:

- Web server using Django, and

- Telegram chatbot using python-telegram-bot.

Django gives me a flexible full-stack webapp, mostly used by me, whereas Telegram is the "frontend" that most users actually use. A part of me is sad that the open web has been shafted like this 😿 But I gotta meet my users where they're at, and by a wide margin they're on the apps.

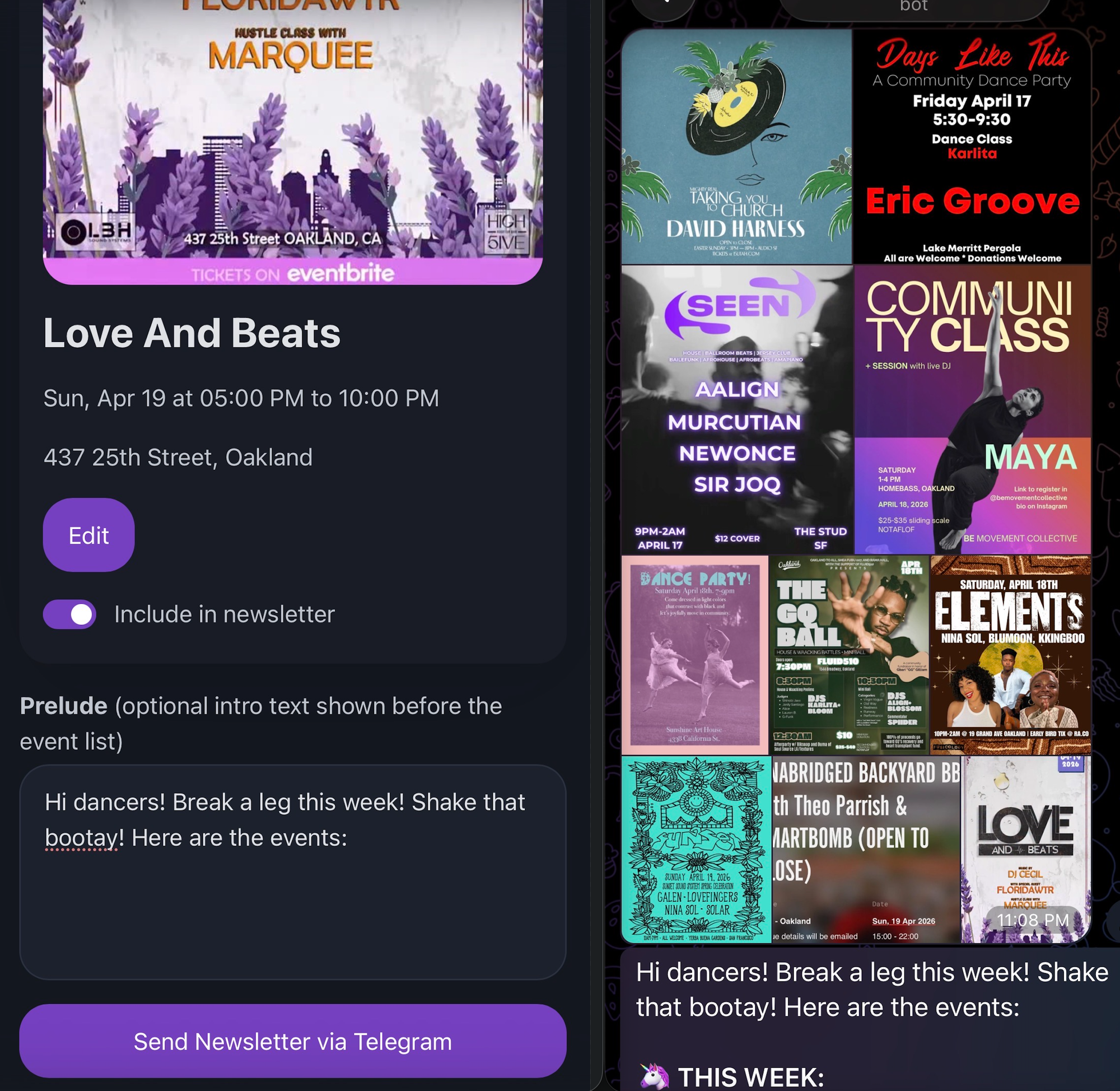

For the event curator (me), I created a newsletter authoring admin page which uses OAuth login via the django-allauth library. Its UI pulls 2 weeks of events from the database and displays them to me. Each event has a checkbox, allowing me to include/exclude it from the current week's newsletter. When I submit this form, I get a beautifully formatted newsletter message via Telegram which I can forward to my subscribers 🤩
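To make the flow concrete, here is a minimal sketch of the two steps behind that form: pick the events in the two-week window, then render only the checked ones as a message. The `Event` fields, function names, and message layout are assumptions for illustration, not the real bay.dance models or newsletter format. Delivery through python-telegram-bot is left out.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Event:  # hypothetical shape, not the real bay.dance model
    id: int
    title: str
    venue: str
    day: date


def upcoming_events(events, today, window_days=14):
    """Events from today up to (not including) today + window_days, soonest first."""
    end = today + timedelta(days=window_days)
    in_window = (e for e in events if today <= e.day < end)
    return sorted(in_window, key=lambda e: e.day)


def build_newsletter(events, checked_ids):
    """Render only the checked events as a plain-text message."""
    lines = ["This week's dance events:"]
    for e in events:
        if e.id in checked_ids:
            lines.append(f"- {e.day:%a %b %d}: {e.title} @ {e.venue}")
    return "\n".join(lines)
```

The checkbox state maps to `checked_ids`, so excluding an event is just leaving its ID out of the set.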

And that's how the proverbial sausage is made 🌭

Future ideas

After building this whole setup so I can do event wizardry from my phone, I have a couple of interesting directions to explore and grow the technology:

- This can turn into "Substack for WhatsApp", i.e. sending newsletters via chat apps instead of via email. I believe group chats are better for reaching people than email is - the images land in their photo gallery, and they get a ping when I send out the message. By contrast, everyone ignores most emails.

- I am very interested in keeping AI agents busy without taking over my attention span throughout the day. Currently, I keep a todo list of cool ideas on my phone and pull in one task at a time into CC Remote Control. I want to build a way for CC to have direct access to my todo list, and to loop through the ideas and just keep building them in the background 😮

If this post sparked any ideas for you, I'd love to hear them!

Gimme more!

If you learned something cool today and would like me to email you the next time I write a tutorial or deep dive, just hit Follow/Subscribe below. I'll deliver it to your inbox, hot off the press. I only post high quality write-ups every 2-4 weeks, so don't stress about getting spammed.

Hope you enjoyed this deep dive into my development setup! We are in a fun computing era where we can experiment with wild ideas and delegate the tedious work to AI. I know for a fact that I wouldn't have been able to create bay.dance in my free time, if it weren't for LLM-assisted and agentic coding. I am excited for all the projects we're gonna build together this year 🤩 Ciao!